Washington — The US military has warned AI company Anthropic that it could lose a major government contract if it does not remove certain safety limits from its AI system.

Defense Secretary Pete Hegseth reportedly gave Anthropic CEO Dario Amodei a deadline to agree to the Pentagon’s terms. The issue centers on the company’s AI chatbot, Claude, and how the military can use it. The contract is worth up to $200 million.

What the Pentagon Wants

The Department of Defense (DoD) wants full access to Claude for military work. Officials say the military should be able to use AI tools for all lawful purposes without built-in limits.

Last year, the Pentagon signed AI contracts with four major companies:

- Anthropic

- Google

- OpenAI

- xAI

Each deal can be worth up to $200 million.

Anthropic was the first company whose AI was approved for use inside classified military systems. The Pentagon has also added AI tools to its internal network, called GenAI.mil.

Why Anthropic Is Saying No

Anthropic says some uses of AI are too dangerous. The company has set clear limits. It does not want its AI to be used for:

- Fully autonomous weapons that can attack without human control

- Mass surveillance of US citizens

- Tracking or silencing public dissent

Dario Amodei has warned that powerful AI systems could be misused if there are no safety rules.

Reports say he did not change his position, even after meeting with Pentagon leaders.

Possible Actions Against Anthropic

Defense officials have said they could take strong steps if Anthropic refuses to agree. These steps may include:

- Canceling the contract

- Designating the company a “supply chain risk”

- Using the Defense Production Act to increase government control over AI tools

Other AI companies have already agreed to the Pentagon’s terms. OpenAI has allowed a version of ChatGPT to be used for military work. xAI’s chatbot Grok is also being added to defense networks.

This leaves Anthropic as the only major AI company still resisting the Pentagon’s terms.

A Bigger Debate About AI and War

The issue is part of a larger debate about how AI should be used in national security.

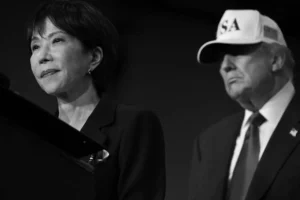

President Donald Trump’s administration has pushed for faster adoption of AI in the military. Officials say the US must lead in the global AI race.

Anthropic has often supported stronger AI regulation, especially during President Joe Biden’s administration, which has created political tension with the current administration.

Experts say this fight could shape the future of AI in the military.

Why This Matters

This dispute is not just about one contract. It is about who controls powerful AI systems.

- Should companies set limits on how their technology is used?

- Or should the government decide how to use AI for defense?

The outcome of this standoff could affect future military AI projects in the United States and around the world.

As AI becomes more powerful, questions about safety, control, and responsibility are becoming more urgent than ever.

Megan Davies is a reporter for White Pine Tribune. After graduating from the University of Toronto, Megan got an internship at CBC News and worked as a reporter and editor. Megan has also worked as a reporter for Global Toronto. Megan covers the economy and community events for White Pine Tribune.